Why Telco Transformations Fail at Testing

And How to Fix It

Highlights, Tech // Werner Willms (CTO) // 07.05.2026

Telco transformation is often framed as a technology journey: modernising legacy BSS, simplifying OSS, exposing APIs, adopting cloud-native patterns, and accelerating digital delivery. All of that matters. But technology change alone is not the outcome operators are actually measured against. The outcome is operational and commercial performance: launching change faster, scaling it safely, and protecting customer trust while systems evolve.

That is why testing deserves far more strategic attention than it typically receives. Not testing as a late project phase, and not testing as a narrow tooling discussion. What matters is the organisation's ability to prove that a service journey still works when multiple systems, interfaces, policies, and downstream processes change at the same time.

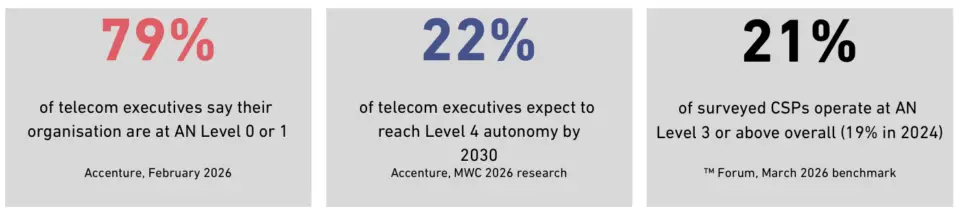

Consider the evidence. Industry analysis presented around Mobile World Congress 2026 indicates that the majority of telecom operators remain at the early stages of autonomous network maturity, despite years of investment in transformation programmes. TM Forum’s March 2026 benchmark report, Assessing CSPs’ progress towards Level 4 autonomous networks, points the same way: while a growing number of operators are validating Level 4 attainment in specific network domains, most CSPs remain at lower maturity levels overall. In telecom, one of the world's most complex software environments, that gap is not abstract. It appears in missed releases, production incidents, and eroded customer trust.

The Problem Is Not Lack of Testing. It Is Fragmented Testing.

A modern telco landscape is not a neat stack. It is an accumulation of critical domains with different lifecycles, different data models, and different operational constraints. BSS platforms manage products, orders, and billing. OSS environments orchestrate provisioning and service activation. API layers expose capabilities to digital channels and partners. Network-facing systems add another layer of dependency, timing, and failure risk.

Analyst estimates of the global OSS/BSS market in 2025 span roughly US$65–86 billion depending on methodology[1], reflecting both the scale of the investment and the complexity involved. Yet the way most organisations test these environments has not kept pace with the architectural reality.

The weakness in many transformation programmes is not that teams ignore quality. The weakness is that quality is often validated in silos. One team tests its application. Another validates an API. A third checks a downstream process. Each part may look stable in isolation, while the real customer journey remains unproven.

That matters because customers do not experience systems. They experience outcomes. If an order is captured correctly but not provisioned correctly, if a product change is reflected in one layer but not in billing, or if a release behaves as expected in one channel and breaks in another, the service experience fails. Traditional system-by-system QA does not reliably reveal that risk early enough.

Why This Moment Is Different

The pressure on software organisations is changing the testing equation, and the pace is accelerating. Gartner's strategic software engineering trends for 2025[2] identify AI-driven automation and future-ready engineering practices as core priorities, reflecting how deeply the delivery model itself is evolving.

For telcos, that shift is amplified by architectural complexity. The KPMG 2025 Global CEO Outlook for Technology & Telecommunications[3] finds that 83% of TMT CEOs are confident in sector growth prospects, but the same research notes that the route to that growth runs directly through technology transformation. Some 62% of TMT leaders believe agentic AI will have a “transformational” or “significant” impact on their organisation, underlining that the transformation ambition is broadly shared.

TM Forum's work on Open Digital Architecture (ODA), future BSS, and cloudification[4] consistently frames the target state as more modular, more automated, and more dependent on multiple systems functioning as a cohesive whole. PwC's Global Telecoms Outlook[5] adds the commercial dimension: global telecoms industry revenue reached $1.1 trillion in 2023, and 5G subscriptions are projected to more than quadruple by 2028. Each of those subscribers will depend on systems that have been validated to work together, not just in isolation.

Taken together, these shifts fundamentally change the role of testing. It can no longer sit at the edge of delivery as a final checkpoint. If releases are more frequent, architectures more distributed, and dependencies more dynamic, then testing has to move closer to the operating rhythm of delivery. Otherwise the organisation creates a structural mismatch: continuous change on one side, phase-based validation on the other.

What Telco Testing Gets Wrong in Practice

A 2024 Appledore Research podcast discussion with Spirent on ‘lab-to-live’ testing[6] captures the challenge precisely: testing must evolve from a siloed function into a continuous process spanning vendor supply chains, in-house QA, and live network validation. Yet in most programmes, testing still follows an implicit assumption: if each critical component has been tested, the end-to-end journey will probably work. That assumption is expensive.

Consider the cost dimension. In its 2022 biennial report, the Consortium for Information & Software Quality (CISQ) estimated the total cost of poor software quality in the US at $2.41 trillion[7], driven by failed projects, legacy system failures, and operational incidents. In telecom, where a single misconfigured billing rule can affect millions of subscribers, the exposure is concentrated and severe.

The failure patterns that appear most frequently across European telco programmes are consistent:

- A product definition is updated in one system, but the downstream order handling logic is not aligned.

- An API response remains technically valid, but a consuming application interprets the payload differently after a release.

- Provisioning completes successfully, but billing logic lags behind configuration changes or edge-case handling.

- A migration preserves core scenarios, yet exception handling, fallback flows, or partner-facing processes behave differently at scale.

- Cross-domain journeys (order-to-activate, change-of-tariff, trouble-ticket resolution) appear stable in unit tests but fail at the handover between BSS, OSS, and network layers.
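The second pattern above is easy to reproduce in miniature. The following Python sketch is illustrative only: the payload fields (`tariffId`, `monthlyPrice`), the cents convention, and both check functions are hypothetical. It shows a provider-side check that validates only keys and types passing both before and after a release, while a consumer-side expectation (integer minor units for billing) catches the change.

```python
from typing import Any

# Hypothetical structural schema: the provider team's own test checks only
# that each key exists and holds an allowed JSON type.
SCHEMA: dict[str, tuple[type, ...]] = {
    "tariffId": (str,),
    "monthlyPrice": (int, float),  # any JSON number is accepted
    "currency": (str,),
}


def structurally_valid(payload: dict[str, Any]) -> bool:
    """Provider-side check: every schema key is present with an allowed type."""
    return all(
        key in payload and isinstance(payload[key], types)
        for key, types in SCHEMA.items()
    )


def consumer_violations(payload: dict[str, Any]) -> list[str]:
    """Consumer-side contract: the (hypothetical) billing client expects
    monthlyPrice as an integer number of cents."""
    price = payload.get("monthlyPrice")
    if type(price) is not int:
        return [f"monthlyPrice must be integer cents, got {price!r}"]
    return []


# Same tariff, before and after a provider release that switched to euros.
before = {"tariffId": "T-100", "monthlyPrice": 1999, "currency": "EUR"}
after = {"tariffId": "T-100", "monthlyPrice": 19.99, "currency": "EUR"}

for label, payload in (("before", before), ("after", after)):
    print(label, structurally_valid(payload), consumer_violations(payload))
```

Both payloads pass the structural check; only the consumer-side expectation flags the release. Consumer-driven contract testing formalises this idea, but the point here is simply where the check lives: with the consumer's interpretation, not only with the provider's schema.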

These are not rare exceptions buried deep in the system landscape. They are the kinds of failures operators encounter repeatedly in large-scale transformation programmes. Fragmented QA creates false confidence. It produces local proof, not systemic proof.

The Automation Trap - And How to Avoid It

Many organisations react to these pressures by investing in automation. That is necessary, but it is not sufficient. Capgemini’s World Quality Report 2025–26 finds that despite sustained investment in automation tools, automation maturity remains uneven across enterprises, with 60% of organisations citing challenges around secure, scalable test data and 58% reporting barriers to AI-tool adoption[8]. Adoption of automation tooling has accelerated; coherent automation strategy has not kept pace.

Automation without orchestration often reproduces the same fragmentation in a faster and more expensive form: multiple automated islands, each efficient in isolation, but still disconnected at the decision level. Tests run across different systems, environments, protocols, and release stages, but results do not map back to business journeys, and defects are not traceable in a way that supports fast action.

The harder challenge is coordination. This is where platform thinking becomes essential. A telco-grade quality approach is not just a set of scripts. It is a way to orchestrate validation across domains, standardise evidence, and preserve visibility when systems change at different speeds.
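To make “results map back to business journeys” concrete, here is a minimal Python sketch. It assumes each automated test, whichever tool runs it, is tagged with the journey and journey step it provides evidence for; the tool names, journey steps, and result format are hypothetical. Results are rolled up into one verdict per journey, and a journey with no evidence for a step is reported as unproven rather than passed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResult:
    tool: str      # which automated island produced the result (hypothetical names)
    test_id: str
    journey: str   # business journey the test provides evidence for
    step: str      # step within that journey
    passed: bool


# Hypothetical journey definitions: the steps that need passing evidence.
JOURNEY_STEPS = {
    "order-to-activate": ["order-capture", "provisioning", "activation", "billing"],
    "change-of-tariff": ["tariff-change", "billing"],
}


def journey_status(results: list[CheckResult]) -> dict[str, str]:
    """Roll tool-level results up to one verdict per business journey."""
    evidence: dict[tuple[str, str], list[bool]] = defaultdict(list)
    for r in results:
        evidence[(r.journey, r.step)].append(r.passed)

    status = {}
    for journey, steps in JOURNEY_STEPS.items():
        failing = [s for s in steps if False in evidence.get((journey, s), [])]
        missing = [s for s in steps if (journey, s) not in evidence]
        if failing:
            status[journey] = "FAILED at " + ", ".join(failing)
        elif missing:
            status[journey] = "UNPROVEN, no evidence for " + ", ".join(missing)
        else:
            status[journey] = "PROVEN"
    return status


results = [
    CheckResult("ui-suite", "UI-12", "order-to-activate", "order-capture", True),
    CheckResult("api-suite", "API-7", "order-to-activate", "provisioning", True),
    CheckResult("oss-suite", "OSS-3", "order-to-activate", "activation", True),
    CheckResult("billing-suite", "BIL-9", "order-to-activate", "billing", False),
    CheckResult("api-suite", "API-8", "change-of-tariff", "tariff-change", True),
]
print(journey_status(results))
```

Four of the five tool-level results are green, yet neither journey is proven. That is the difference between local and systemic proof, expressed as data.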

From System Validation to Journey Validation

The more useful question is not: “Did each system pass its test cases?” The more useful question is: “Can the operator still execute the business journey correctly, repeatedly, and transparently under real delivery conditions?”

That shift changes everything. It moves the quality conversation from whether individual systems pass their tests to whether the business journey works end to end.

A true telco end-to-end test does not stop at a screen or an API call. It follows the transaction through the full commercial and operational chain: from product selection and order capture, through orchestration and provisioning, into activation, billing, and customer communication.

Only when those connected steps work reliably together under real delivery conditions can a release be considered trustworthy.
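A minimal Python sketch of such a test, under clear assumptions: each step is a hypothetical stub standing in for a call to the real order management, provisioning, billing, or notification system, and all steps share one transaction context. Each step checks what the previous step handed over, so a failure is reported at the handover where it occurs rather than inside a single system's test report.

```python
from typing import Any, Callable

Context = dict[str, Any]


class StepFailed(Exception):
    """Raised when a step's check on the shared transaction context fails."""


def check(condition: bool, message: str) -> None:
    if not condition:
        raise StepFailed(message)


def run_journey(steps: list[tuple[str, Callable[[Context], None]]], ctx: Context) -> list[str]:
    """Run journey steps in order against one shared context.

    Returns the names of completed steps; on the first failure, re-raises
    naming the failing step and the steps completed before it.
    """
    completed: list[str] = []
    for name, step in steps:
        try:
            step(ctx)
        except StepFailed as exc:
            raise StepFailed(f"failed at '{name}' after {completed}: {exc}") from exc
        completed.append(name)
    return completed


# Hypothetical billing rate table (offer id -> monthly price in cents).
RATE_PLANS = {"FIBRE-500": 3999}


# Hypothetical stubs; a real suite would call the systems themselves.
def capture_order(ctx: Context) -> None:
    ctx["order"] = {"id": "ORD-1", "offer": ctx["offer"], "state": "acknowledged"}


def provision(ctx: Context) -> None:
    check(ctx["order"]["state"] == "acknowledged", "order was not accepted")
    ctx["service"] = {"offer": ctx["order"]["offer"], "state": "active"}


def bill(ctx: Context) -> None:
    offer = ctx["service"]["offer"]
    check(offer in RATE_PLANS, f"no rate plan for activated offer {offer}")
    ctx["charge_cents"] = RATE_PLANS[offer]


def notify(ctx: Context) -> None:
    ctx["message"] = f"Your {ctx['service']['offer']} service is now active"


JOURNEY = [
    ("order capture", capture_order),
    ("provisioning", provision),
    ("billing", bill),
    ("customer notification", notify),
]

print(run_journey(JOURNEY, {"offer": "FIBRE-500"}))
try:
    run_journey(JOURNEY, {"offer": "FIBRE-1000"})
except StepFailed as err:
    print(err)
```

The second run activates an offer for which billing has no rate plan: every step up to billing succeeds, which mirrors the “provisioning completes, billing lags” pattern described earlier.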

Where AI Fits - And Where It Does Not

AI is already reshaping software engineering and testing. Gartner’s strategic trends for software engineering[9] identify AI-driven automation as a core priority for 2025 and beyond, and predict that by 2028, 90% of enterprise software engineers will use AI code assistants. The implications for testing are real: faster test design, improved prioritisation, anomaly detection, pattern recognition, and more efficient result analysis.

Yet data from the TM Forum / AWS Generative AI Maturity Interactive Tool (GAMIT, 2024) is a useful reality check: only 25% of CSPs currently feel equipped to leverage advanced AI techniques[10], and just 16% are confident in using AI to optimise cost and ROI. The operational lesson is easy to overlook: AI amplifies the quality model that already exists. It does not compensate for a fragmented one.

If the testing architecture is fragmented, AI scales fragmentation. If the quality model is coherent, built around journeys rather than components, AI can scale insight, speed, and precision. That distinction matters enormously for operators investing in AI-powered delivery pipelines.

Why OSS/BSS Modernisation Demands a New Testing Model

TM Forum's work on Open Digital Architecture[11] emphasises that new telecom business opportunities depend on systems being able to adapt within increasingly automated, multivendor ecosystems. In practice, hard evidence of faster process resolution following OSS/BSS modernisation programmes has historically been scarce, and most gains remain fragile until they can be validated end-to-end.

This is particularly relevant in cloudification and API exposure programmes. Catalog changes, partner logic, phased migrations, and microservices decomposition all increase the number of moving parts that must stay aligned. The risk is no longer just that a single platform fails. The greater challenge is that, as architectures become more modular and distributed, it becomes significantly harder to see whether the overall service journey is still behaving correctly.
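One inexpensive complement to journey tests is a drift check across the places where catalog data is duplicated. The Python sketch below assumes the product catalog is the master and that each downstream system (here, hypothetically, order handling and billing) can export the offer ids and versions it is configured for; the system names, offer ids, and version scheme are illustrative. It reports offers that are missing downstream, configured at a different version, or unknown to the catalog.

```python
def catalog_drift(
    catalog: dict[str, int], downstream: dict[str, dict[str, int]]
) -> dict[str, list[str]]:
    """Compare offer versions in the master catalog against each downstream system.

    catalog: offer id -> version in the product catalog (assumed to be the master).
    downstream: system name -> (offer id -> version that system is configured for).
    Returns system name -> discrepancies; aligned systems are omitted.
    """
    report: dict[str, list[str]] = {}
    for system, offers in downstream.items():
        issues = []
        for offer, version in sorted(catalog.items()):
            if offer not in offers:
                issues.append(f"{offer}: missing")
            elif offers[offer] != version:
                issues.append(f"{offer}: v{offers[offer]} != catalog v{version}")
        # Offers configured downstream that the catalog no longer knows about.
        for offer in sorted(offers.keys() - catalog.keys()):
            issues.append(f"{offer}: not in catalog")
        if issues:
            report[system] = issues
    return report


catalog = {"FIBRE-500": 3, "MOBILE-XL": 2}
downstream = {
    "order-handling": {"FIBRE-500": 3, "MOBILE-XL": 2},
    "billing": {"FIBRE-500": 2, "MOBILE-XL": 2, "LEGACY-DSL": 1},
}
print(catalog_drift(catalog, downstream))
```

A static check like this finds misalignment before any order is placed, but it cannot show that the journey behaves correctly; it narrows the search rather than replacing end-to-end validation.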

As a TM Forum member, Tallence is deeply engaged in how the industry is reframing these standards. The Open API catalogue, the ODA component framework, and the Autonomous Networks maturity levels are not abstract targets: they are the architecture against which release quality must be validated. An E2E testing strategy that is not aligned with TM Forum standards is not truly fit for purpose in a modern telco environment.

How to Fix It: Five Practical Shifts

There is no single fix for this complexity, but there is a clear direction operators can take. Based on our work with European telecom operators, five shifts define the difference between a QA function that constrains transformation and one that enables it:

- End-to-End Visibility. Replace local confidence with systemic proof. Define your business-critical journeys (order-to-activate, change-of-tariff, billing lifecycle) and validate them across all systems, not inside each one.

- Repeatable Automation. Move from manual regression testing to automated test suites that run with every release. Target 60–70% automation coverage of critical paths; use AI to prioritise high-risk areas.

- Evidence-Based Releases. Replace status updates with structured test evidence. Every release gate should be backed by centralised, comparable results, not scattered screenshots and manually compiled reports.

- Platform Orchestration. Connect your test tooling. Tests need to run across systems, protocols, and environments as coordinated sequences, not as isolated scripts owned by individual teams.

- Business-Outcome Validation. Measure whether journeys work, not whether components pass. The question is not 'Did the API respond?' It is 'Did the subscriber activate correctly, on time, with the right billing behaviour?'
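The release gate implied by these shifts can be sketched in a few lines of Python. The data model is hypothetical: each business-critical journey reports how many critical paths it has, how many of them are automated, and whether its latest end-to-end run passed. The default threshold of 60% is taken from the lower end of the 60–70% coverage target above; everything else is an assumption for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JourneyEvidence:
    journey: str
    critical_paths: int   # critical paths identified for this journey
    automated_paths: int  # of those, how many run automatically on every release
    passed: bool          # did the latest end-to-end run of the journey succeed?


def release_gate(
    evidence: list[JourneyEvidence], min_coverage: float = 0.6
) -> tuple[bool, list[str]]:
    """Return (go, reasons): no-go if any journey failed or coverage is below target."""
    reasons = [f"{e.journey}: end-to-end run failed" for e in evidence if not e.passed]
    total = sum(e.critical_paths for e in evidence)
    automated = sum(e.automated_paths for e in evidence)
    coverage = automated / total if total else 0.0
    if coverage < min_coverage:
        reasons.append(
            f"automation coverage {coverage:.0%} is below target {min_coverage:.0%}"
        )
    return (not reasons, reasons)


evidence = [
    JourneyEvidence("order-to-activate", critical_paths=10, automated_paths=8, passed=True),
    JourneyEvidence("change-of-tariff", critical_paths=6, automated_paths=4, passed=True),
    JourneyEvidence("billing lifecycle", critical_paths=4, automated_paths=2, passed=False),
]
go, reasons = release_gate(evidence)
print("GO" if go else "NO-GO", reasons)
```

Coverage here is aggregated across journeys, so one poorly automated journey can hide behind well-automated ones; a per-journey threshold is a reasonable variation if that matters for a given release.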

Conclusion

Telco transformations do not fail only because architectures are complex. They fail because organisations cannot reliably prove that change remains correct across the full service journey. That is the real testing challenge in telecom, and it is becoming more acute, not less, as release velocity increases and system architectures become more composable.

The data is clear. TM Forum's Autonomous Networks research shows the industry is far earlier in its automation maturity than the transformation ambition implies.[12] Accenture’s MWC 2026 research confirms that most operators remain stuck at the early autonomy levels despite sustained investment. And industry discussion around ‘lab-to-live’ testing points precisely at the gap: testing that is still siloed by domain cannot validate the journeys that matter most.[13]

The operators that solve this will not just improve QA metrics. They will reduce release risk, shorten the path from change to execution, and protect customer trust while transformation is still underway. End-to-end testing is not a secondary workstream in telco transformation. It is one of the disciplines that ultimately determines whether transformation delivers measurable operational value - or remains an unfinished architectural ambition.

Meet Our Experts

Whether you are reassessing your QA setup, scaling automation, or aligning testing with TM Forum architectures, we'd love to explore where measurable impact can be achieved quickly.

Discover more here and book a meeting with our Experts

We will also be attending Digital Transformation World 2026 in Copenhagen. Feel free to connect with us there as well.

About the Author

Werner Willms is Chief Technology Officer at Tallence AG, responsible for product strategy and engineering across the firm’s telco and digital practice areas. With a career spanning BSS/OSS transformation, test automation architecture, and cloud-native platform engineering, Werner advises European telecom operators on quality strategy, release engineering, and the transition from manual to AI-assisted end-to-end validation.

Footnotes

[1] Analyst range for 2025 varies by methodology and scope: Straits Research estimates US$78.39 billion; IMARC Group US$65.81 billion; Future Market Insights US$85.7 billion. No single industry-wide consensus figure exists.

[2] Gartner: Top Strategic Trends in Software Engineering for 2025 and Beyond, press release, 1 July 2025.

[3] KPMG 2025 Global CEO Outlook – Technology & Telecommunications (11th edition, based on 1,350 CEOs, survey period 5 August – 10 September 2025): 83% of TMT CEOs confident in sector growth prospects; 62% of TMT leaders say agentic AI will have a “transformational” or “significant” impact on their organisation.

[4] TM Forum: Future BSS, building new customer-focused strategies; BSS/OSS Cloudification Guide Suite; Open Digital Architecture (ODA) framework documentation.

[5] PwC, Global Telecoms Outlook, press release dated 28 February 2025 (ahead of MWC Barcelona): global telecoms industry revenue rose 4.3% in 2023 to US$1.1 trillion; 5G subscriptions projected to more than quadruple from 1.79 billion in 2023 to 7.51 billion in 2028.

[6] The Appledore Research Podcast, Episode 42: “Transforming Test from Lab to Live”, 30 December 2024 (interview with Anil Kollipara, VP Product Management Test Automation at Spirent). The “lab-to-live” terminology originates in Spirent’s positioning and is discussed, not authored, by Appledore.

[7] Consortium for Information & Software Quality (CISQ): “The Cost of Poor Software Quality in the US: A 2022 Report”, biennial report, published 6 December 2022 (author Herb Krasner). Estimated total cost: US$2.41 trillion; accumulated technical debt approx. US$1.52 trillion.

[8] Capgemini World Quality Report 2025–26 (17th edition): 60% of organisations cite challenges around secure, scalable test data; 58% cite barriers to AI-tool adoption.

[9] Gartner, “Top Strategic Trends in Software Engineering for 2025 and Beyond”, press release, 1 July 2025. Gartner predicts that by 2028, 90% of enterprise software engineers will use AI code assistants, up from less than 14% in early 2024.

[10] TM Forum & AWS, Generative AI Maturity Interactive Tool (GAMIT), launched September 2024, based on surveys of 200+ AI decision-makers at CSPs: only 25% of operators feel equipped to leverage advanced techniques (fine-tuning, RAG, prompt engineering); only 16% confident in using these methods to optimise cost and ROI.

[11] TM Forum: Open Digital Architecture (ODA), Component Framework and Design Guidelines. Available at tmforum.org/oda. See also: ODA Canvas Specification, TM Forum IG1171.

[12] TM Forum, “Assessing CSPs’ progress towards Level 4 autonomous networks”, March 2026 benchmark report.

[13] The Appledore Research Podcast, Episode 42 (30 December 2024), interview with Spirent on “lab-to-live” testing. See footnote 6.

References & Source Notes

Sources are cited in footnotes throughout the article. Where a figure could not be verified against an openly accessible primary publication, the original reference has been replaced or removed in this version.

Accenture: Autonomous Networks maturity research, presented around Mobile World Congress 2026, Barcelona. Reported in Fierce Network, The Fast Mode and FutureNet World, March 2026.

TM Forum: “Assessing CSPs’ progress towards Level 4 autonomous networks”, benchmark report, March 2026. Available via inform.tmforum.org (registration required).

TM Forum & AWS: Generative AI Maturity Interactive Tool (GAMIT), launched September 2024. Based on surveys of 200+ AI decision-makers at CSPs; 25% feel equipped for advanced AI techniques; 16% confident in cost/ROI optimisation.

CISQ: “The Cost of Poor Software Quality in the US: A 2022 Report” (biennial report), published 6 December 2022. Total cost US$2.41 trillion; technical debt approx. US$1.52 trillion.

Gartner: Top Strategic Trends in Software Engineering for 2025 and Beyond. Press release, 1 July 2025. AI-driven automation and future-ready engineering as core priorities; prediction that 90% of enterprise software engineers will use AI code assistants by 2028.

Multiple analysts: OSS/BSS market size estimates for 2025 span approximately US$65–86 billion across Straits Research, IMARC Group and Future Market Insights; no single industry-consensus figure exists.

KPMG: 2025 Global CEO Outlook – Technology & Telecommunications, 11th edition (survey of 1,350 CEOs, August–September 2025). 83% of TMT CEOs confident in sector growth; 62% expect agentic AI to have transformational or significant impact.

PwC: Global Telecoms Outlook 2025–2029. Revenue and 5G subscription projections.

Capgemini: World Quality Report 2025–26 (17th edition). Quality engineering and test automation challenges.

Appledore Research: The Appledore Research Podcast, Episode 42: “Transforming Test from Lab to Live”, 30 December 2024. Interview with Spirent on “lab-to-live” testing (term originates in Spirent’s product positioning).

TM Forum: Open Digital Architecture (ODA) documentation; Future BSS reports; BSS/OSS Cloudification Guide Suite.